Nonprofit organizations are constantly up against the challenge of doing more with less. With AI, NPOs are able to reduce costs and gain real insights into their donors. But the only way to get the full benefits of AI is with proper AI Governance in place. Sounds foreign? Then you're in the right place. Let's dive into everything you need to know and how to get started with AI governance for nonprofits to ensure your organization is getting the full benefits of its tech stack.

A poorly governed AI system doesn't just make mistakes; it makes them at scale, invisibly, and in your organization's name. A model that hallucinates grant eligibility criteria, misroutes a major gift prospect, or leaks PII from a beneficiary record doesn't give you a second chance to correct the record with that stakeholder.

The 3 Pillars of AI Governance: Your Framework Foundation

Most organizations treat AI governance as an IT project. It isn't. It's an organizational discipline that simultaneously touches data engineering, legal liability, communications standards, and program delivery. At Keyrus, we structure the work around three pillars, and in our experience a gap in any one of them is enough to undermine the other two.

People: The humans who build, use, challenge, and oversee your AI systems, structured around clear roles and a culture of accountability.

Process: The documented rules that govern what your AI can do, what it must refuse, and how its outputs are reviewed, audited, and updated over time.

Technology: The technical stack, including retrieval pipelines, automated guardrails, and data infrastructure, that enforces your policies at runtime.

Pillar 1: Process

Principles don't govern AI; processes do. There's a big difference between stating that your organization "values privacy" and defining, in writing, that no model output may include a beneficiary's name, address, or case ID without explicit retrieval authorization. Your process layer is the formal specification for model behavior: what it can retrieve, what it must refuse, how its outputs are reviewed, and who owns the decision when something goes wrong. Establishing a governance committee is critical for defining accountability processes for GenAI applications. This committee determines how your organization manages each AI application and the processes around it, including what happens when someone is interacting with the AI and it says something inappropriate or plainly wrong. The chances of a random hallucination like that are low, but they are not zero, which is why human-in-the-loop review is a critical component of any AI process you establish.
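To make the difference between a principle and a process concrete, the written rule above can be expressed as a check that runs before any output is released. This is a minimal sketch, not a prescribed implementation: the field names, the `DraftOutput` structure, and the two routing labels are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical field names; the written policy restricts name, address, and case ID.
RESTRICTED_FIELDS = {"name", "address", "case_id"}


@dataclass
class DraftOutput:
    text: str
    fields_used: set[str]        # beneficiary fields the draft draws on
    retrieval_authorized: bool   # was explicit retrieval authorization granted?


def review_route(draft: DraftOutput) -> str:
    """Return 'release' or 'human_review' for a draft model output."""
    restricted = draft.fields_used & RESTRICTED_FIELDS
    if restricted and not draft.retrieval_authorized:
        # Human-in-the-loop: a person decides, and owns, what happens next.
        return "human_review"
    return "release"
```

The point of the sketch is that the rule is testable: a draft that touches a restricted field without authorization never reaches a stakeholder without a named reviewer seeing it first.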

Monitor for model drift: Model drift is the gradual degradation of AI accuracy as the real world diverges from the data the model was trained on or grounded on. In a nonprofit context, this might mean a donor-scoring model that was calibrated during a strong fundraising year quietly underperforms after a market downturn, without triggering any visible error. Drift reviews should be scheduled at least quarterly, should compare current output distributions against a baseline, and should have a defined threshold that triggers retraining or re-grounding.
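One common way to compare a current output distribution against a baseline is the Population Stability Index (PSI). The sketch below is one possible approach rather than a required method; the function names are hypothetical, and the 0.2 threshold is a widely used rule of thumb that your committee should replace with its own documented value.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two samples of model scores; 0.0 means identical bin shares."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all baseline values match

    def bin_shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps empty bins from producing log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    base, cur = bin_shares(baseline), bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def needs_drift_review(baseline, current, threshold=0.2):
    """True when the shift exceeds the (assumed) review threshold."""
    return population_stability_index(baseline, current) > threshold
```

In a quarterly review, `baseline` would be the donor scores captured at calibration time and `current` the scores from the most recent period.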

Document the full model lifecycle: Every stage, including data sourcing, preprocessing, model selection, fine-tuning, deployment, and eventual decommissioning, needs a documented owner and a retrievable audit trail. Model explainability isn't just a regulatory concept: it's the ability to reconstruct, from logs, why a specific AI output was generated. If a major donor asks why they received a particular email, or a regulator asks how a beneficiary decision was reached, "AI did it" won’t cut it.
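A retrievable audit trail can start as one structured record per AI output. The following is a sketch under stated assumptions: the record fields, the function names, and the choice of a JSON Lines file are illustrative, not a standard. Prompts and outputs are stored as hashes here so the log itself does not become a second copy of sensitive text; that is a design choice your committee may decide differently.

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_audit_record(prompt, retrieved_doc_ids, model_version, output, owner):
    """Capture what is needed to reconstruct why an output was generated."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "owner": owner,                                 # documented owner of this stage
        "retrieved_doc_ids": list(retrieved_doc_ids),   # sources the answer drew on
        "prompt_sha256": _sha256(prompt),
        "output_sha256": _sha256(output),
    }


def append_audit_record(path, record):
    """Append one record as a line of JSON (hypothetical log location)."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, sort_keys=True) + "\n")
```

With records like this, the answer to "why did this donor receive that email?" becomes a lookup of model version and source documents rather than a guess.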

Align with Frameworks, Regulations, and Guidelines before you have to: AI regulation varies not only by nation but also, in the US, by state. In Europe, the GDPR and the EU AI Act are the key regulatory frameworks, and if you're a global nonprofit, they must be considered when establishing your governance framework. In the US, the National Institute of Standards and Technology (NIST) introduced the AI Risk Management Framework (AI RMF), a voluntary, flexible guide for managing risk when designing, developing, and deploying AI systems. In Canada, the proposed Artificial Intelligence and Data Act (AIDA) remains influential and focuses on transparency, risk, and accountability. Canada has also introduced a voluntary pact, the Voluntary Code of Conduct on the Responsible Development and Management of Advanced Generative AI Systems; organizations that sign on commit to identified measures for responsible AI use. It's easier to design your systems with these rules in mind from the start than to risk non-compliance and heavy fines.

Pillar 2: People

When you established your governance committee in the process pillar, you likely had conversations about the people and roles on the committee. Our people pillar overlaps somewhat with the process pillar, but there are distinctions. For people, you must determine and assign roles for your AI governance committee. This committee will be similar to your data governance committee, if you have one. It will need to decide who can see what data, who has access, how you define product and product hierarchy, who handles hallucinations, and who sets security protocols. It's also worth thinking about which additional skill sets your organization needs. You'll want to go beyond technical skills to include, for example, educational and ethical expertise. Stewardship is key to this pillar being successful.

What this looks like in practice:

Establish a Red Team: Designate a small group, typically 3–5 people drawn from communications, program, and data teams, tasked with systematically attempting to break your AI before users do. This means probing for jailbreak vulnerabilities, testing whether restricted donor data can be surfaced through indirect prompts, and verifying that guardrail blocks actually fire. Red Team findings should feed directly into your model update cycle, not just a report.
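Red Team probes are easier to repeat after every model update when they live in a small harness. This is only a sketch: `toy_assistant` is a stand-in for your real system, and the `[BLOCKED]` marker is a hypothetical convention for how a guardrail block shows up in a reply.

```python
REFUSAL_MARKER = "[BLOCKED]"  # hypothetical marker emitted when a guardrail fires


def run_probes(ask, probes, refusal_marker=REFUSAL_MARKER):
    """Return the probes that were NOT refused, i.e. the findings to fix."""
    return [probe for probe in probes if refusal_marker not in ask(probe)]


def toy_assistant(prompt):
    """Stand-in for the real assistant; blocks only one narrow pattern."""
    lowered = prompt.lower()
    if "donor" in lowered and "address" in lowered:
        return REFUSAL_MARKER
    return f"Answering: {prompt}"
```

Calling `run_probes(toy_assistant, probes)` with a list of adversarial prompts returns the ones that slipped through, which is the list that should feed the model update cycle.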

Evolve your data roles: The generalist BI analyst profile, typically strong in SQL and dashboards but limited in ML and cloud infrastructure, is insufficient for governing a production AI system. NPOs now need staff who can write Python-based data pipelines, configure AWS or Azure cloud environments, and understand prompt engineering well enough to know when a model is behaving unexpectedly. If your organization can't hire for this profile, a consulting partner embedded during deployment is a practical alternative to leaving these gaps unfilled.

Treat AI literacy as a technical control: Staff who understand LLM failure modes are less likely to accept and propagate a confidently wrong output. A two-hour literacy session focused specifically on when not to trust the model is more valuable than a generic "AI awareness" training that your team can click through in 20 minutes. The goal is calibrated skepticism, not enthusiasm or fear.

Pillar 3: Technology

The third pillar of AI Governance is Technology. More and more technology companies are releasing out-of-the-box capabilities to manage AI hallucinations and alert/guard against misuse. There’s even new tech that addresses common AI issues to prevent problems. When establishing your AI governance, it’s important to take these technological aspects into consideration. Do you want to have security/preventative tech in addition to your AI?

Retrieval-Augmented Generation (RAG): RAG is the primary technical mechanism for reducing hallucinations in nonprofit AI production. Instead of allowing a model to generate answers from its general training data, which has no knowledge of your programs, your policies, or your donors, RAG routes every query through a retrieval step that pulls relevant, verified documents from a controlled source (typically S3 buckets or an internal database) before the model generates a response. The model is instructed to answer only from what it retrieves, so retrieval quality matters: documents with outdated or incomplete metadata will surface in the wrong context, which is why data hygiene and RAG architecture must be designed together, not sequentially.
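The retrieve-then-generate flow can be illustrated in a few lines. This sketch swaps in simple keyword overlap for the vector search a production system would normally use, and it stops at building the prompt rather than calling a model; the document structure and function names are assumptions for illustration.

```python
import re


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by shared words with the query; drop non-matches."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query, retrieved):
    """Ground the model in retrieved sources; return None when there are none."""
    if not retrieved:
        return None  # nothing verified to cite, so refuse instead of guessing
    sources = "\n".join(f"[{doc['id']}] {doc['text']}" for doc in retrieved)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

Returning `None` when nothing is retrieved is the design choice that matters most here: the system declines rather than falling back on the model's general training data.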

Automated guardrails: Guardrails are programmatic rules that intercept a query before it reaches the model, or a response before it reaches the user, and either block it entirely, modify it, or route it to a pre-approved static response. Common configurations for nonprofits include blocking any query that requests financial or medical guidance, filtering outputs for PII before delivery, and restricting retrieval to documents tagged for the querying user's role. Amazon Bedrock Guardrails, for example, supports topic denial, PII redaction, and grounding checks as configurable policy layers, meaning your documented policies can be enforced at the infrastructure level rather than relying on the model's own judgment. Practically speaking, guardrails take the form of:

Deterministic filters that scan for credentials, social security numbers, or other sensitive data that can be found with simple search patterns. If those filters trap anything, they can either do an on-the-fly replacement or flag the issue for review.

LLM-powered guidelines around tone, communication style, and acceptable outputs. They can be configured to modify the output to better suit the proper intent, or again, flag for review.

Targeted language models (small or large) that detect malicious input or output. These typically will simply deny the text from being presented to the user or the LLM.
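The first of these, the deterministic filter, is the simplest to illustrate. Below is a minimal sketch that covers both behaviors described above, on-the-fly replacement and flag-for-review. The two patterns are deliberately simple assumptions (a US social security number format and a basic email shape) and would need extending for real use.

```python
import re

# Illustrative patterns only; real deployments need a reviewed, broader set.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def apply_filters(text, mode="redact"):
    """Return (text, hits). mode='redact' replaces matches; mode='flag' leaves text as-is."""
    hits = []
    for label, pattern in PATTERNS.items():
        if pattern.search(text):
            hits.append(label)
            if mode == "redact":
                text = pattern.sub(f"[{label.upper()} REDACTED]", text)
    return text, hits
```

A non-empty `hits` list in `flag` mode is the signal to hold the output for human review instead of delivering it.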

Data maturity and metadata strategy: The most common reason a well-architected RAG system underperforms is poor document metadata. If your internal documents don't have consistent, accurate date stamps, author attribution, and subject tags, the retrieval layer cannot reliably surface the most current and relevant content. A grant policy updated six months ago but tagged with a two-year-old date will be ranked below an older version that happens to have better metadata. Data governance work is a prerequisite for RAG quality, not an afterthought.
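A metadata check of this kind can run before documents are ever indexed. The sketch below assumes a hypothetical schema with `updated`, `author`, and `tags` fields; it reports which fields are missing and picks the most recent version among documents whose metadata is complete.

```python
from datetime import date

REQUIRED_FIELDS = ("updated", "author", "tags")  # assumed metadata schema


def missing_fields(doc):
    """List required metadata fields that are absent or empty."""
    return [field for field in REQUIRED_FIELDS if not doc.get(field)]


def current_version(versions):
    """Pick the most recently updated version with complete metadata."""
    complete = [doc for doc in versions if not missing_fields(doc)]
    if not complete:
        return None
    return max(complete, key=lambda doc: doc["updated"])


grant_policy_versions = [
    {"id": "grant-policy-v1", "updated": date(2023, 1, 10), "author": "Programs", "tags": ["grants"]},
    {"id": "grant-policy-v2", "updated": date(2024, 6, 1), "author": "Programs", "tags": ["grants"]},
]
```

Documents that fail `missing_fields` are better routed back to their owner for cleanup than silently indexed.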

What Governed AI Can Mean for Your Organization

A solid governance foundation isn't a constraint on ambition; it's what makes ambitious use cases safe to deploy. The following represent applications we have either built for nonprofit clients or are actively scoping, all of which require the governance infrastructure described above to operate responsibly:

Pediatric Cancer Research Organization, Building the Data Foundation for AI: A nonprofit advancing pediatric cancer research had critical data fragmented across disconnected systems, making advanced analysis impossible before the infrastructure was fixed. Keyrus established a secure AWS foundation with standardized ingestion pipelines and a centralized data catalog, cutting dataset preparation time by 70% and delivering 3x faster turnaround for predictive models. The lesson: no RAG pipeline performs reliably on fragmented data. Governance and infrastructure must come first.

Professional Membership Organization, Eliminating Shadow Data: Departments were pulling from incompatible sources, such as Excel files, standalone dashboards, and separate databases, with no single system IT could consistently manage or trust. Keyrus consolidated our client's six source systems into a unified Amazon Redshift warehouse, reducing manual reporting time by 70% and delivering 3x faster access to insights. The result was the kind of metadata-clean, role-accessible data environment that any AI layer requires before it can operate responsibly.

Leading Medical Association, Fixing the Foundation Before Adding AI: The organization was approaching $1 million annually in Tableau licensing costs, running on flat, denormalized tables that couldn't support modern analytics workloads. Keyrus migrated our client to Power BI on Azure with dimensional modeling and autoscaling infrastructure, cutting operational and licensing costs by 85% and improving data model performance by 60%. AI systems inherit the structural problems of whatever data model sits beneath them. Rebuilding the foundation, not layering AI on top of a broken one, is the right sequence.

Rapid Donor Analytics, Governed AI for Fundraising, Deployed in Weeks: Most nonprofits can't afford a multi-year AI implementation, and shouldn't need one. Rapid Donor Analytics is a pre-configured solution built on AWS S3 and Salesforce Nonprofit Cloud that deploys in 4 to 6 weeks, giving small to mid-sized organizations real-time donor insights, AI-driven segmentation, and automated fundraising dashboards without requiring a large internal IT team. AI models score donors using historical giving trends to forecast behavior, identify high-value prospects, and power personalized outreach campaigns. The predictive donor scoring use case described in this article is ready to deploy. The governance infrastructure, clean data pipelines, role-based access, and team training are built into the engagement, not bolted on afterward.

Caution Ahead! AI Risks to Watch and Prepare For

The nonprofit sector faces unique pressures that make AI governance not just important, but vital. Non-profits must safeguard public trust, protect vulnerable populations, and maintain strict compliance with donor requirements and regulatory frameworks. The organizations that struggle with AI adoption aren't failing because the technology doesn't work. They're failing because they didn't account for what responsible deployment actually requires.

Generative AI introduces three major risk categories that need to be governed:

Bias and Fairness Concerns: Large language models (LLMs) can amplify existing biases, potentially creating discriminatory content in outreach materials or program recommendations. For organizations serving vulnerable populations, this isn't just a PR problem; it's an ethical crisis.

Compliance Uncertainty: Non-profits operate under a complex web of regulations, from safeguarding requirements for youth programs to HIPAA-adjacent rules for health-focused organizations. Without clear governance, audit, and reporting frameworks, AI implementations become compliance landmines.

Data Privacy Risks: When AI models process donor information or beneficiary stories, any data leakage could violate regulations such as GDPR, state privacy laws, or donor trust agreements. The consequences aren't just financial; they're reputational disasters that can take years to recover from.

Outside of those general risk categories, don’t forget to define your goals. It’s great to have a goal of becoming an AI-driven organization, but what does that mean and how does it look? Does implementing a base LLM product fit the criteria you need, or do you want AI to be integrated into every part of your business, with agents running as many administrative tasks as possible? Before any model is built, you need a concrete plan of the requests you expect the system to handle, alongside documented examples of what an acceptable response looks like for each. Without this, there is no way to evaluate whether the model is performing well, no baseline to measure improvement against, and no shared definition of done. Defining your use cases with that level of specificity is tedious work, but it is the single most reliable predictor of a successful deployment.
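The plan of expected requests and acceptable responses can be captured as a small evaluation set that doubles as the baseline. This is a sketch under simple assumptions: acceptance is reduced to phrases a response must and must not contain, and the `UseCase` structure and the example case are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    request: str
    must_include: list[str] = field(default_factory=list)
    must_not_include: list[str] = field(default_factory=list)


def passes(case, response):
    """Check one response against its documented acceptance criteria."""
    text = response.lower()
    has_required = all(term.lower() in text for term in case.must_include)
    has_forbidden = any(term.lower() in text for term in case.must_not_include)
    return has_required and not has_forbidden


def pass_rate(cases, respond):
    """Share of use cases whose response is acceptable; `respond` is your system."""
    if not cases:
        raise ValueError("define at least one use case before evaluating")
    return sum(passes(case, respond(case.request)) for case in cases) / len(cases)


# Hypothetical example of one documented use case.
EXAMPLE_CASES = [
    UseCase(
        request="How do I update my monthly gift?",
        must_include=["donor portal"],
        must_not_include=["tax advice"],
    ),
]
```

Re-running `pass_rate` after each iteration gives the shared definition of done the paragraph above calls for.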

And with defining goals comes defining expectations. It’s important to remember that most LLM applications you currently use are built, deployed, and tuned by massive companies with a vested interest in selling you more LLM usage. There is a lot going on behind the scenes that you won't get as part of the base LLM product. The ease of use you experience is the result of a massively tested and validated product. Your solution won't be at first. Be aware that the first few iterations are rough, but they improve quickly.

What Next? Governance as a Growth Engine

The most successful non-profits view AI governance not as a constraint, but as a competitive advantage. When donors, regulators, and partners see comprehensive controls protecting their interests, trust increases. Establishing this trust is crucial to addressing the elephant in the room that pops up in every AI Workshop we have conducted. AI for nonprofits can be a game-changer if you know how to properly govern it.

Even if you have the technical foundation, governance frameworks must reflect each organization's unique mission, values, and stakeholder needs. That's where strategic partnerships become invaluable: working with experts, like Keyrus, who understand the technical possibilities, the governance principles, and the nonprofit context.

Watch the replay of our webinar "Unlock NPO Growth with AI: Mastering Governance for Donor Insights," where, alongside FINCA International and AWS, we dive deeper into implementation strategies, share additional use cases, and provide a tactical checklist for getting started.

What is AI Governance?

AI Governance is a set of rules, standards, and policies put in place to ensure AI is being used properly, ethically, legally, and responsibly. It manages risks such as bias and privacy breaches while enabling innovation. AI governance ensures that AI technologies are developed, used, and maintained in a way that maximizes outcomes and trust while keeping risks and security under control. In short, AI governance maximizes the benefits of your AI investments while minimizing risks and potential harms. With more than 28 years of experience in data and artificial intelligence, Keyrus helps you set up the right AI governance to create competitive advantage from AI.

What are the 3 pillars of AI Governance?

At Keyrus, we believe the three (3) pillars of AI Governance are Process, People, and Technology. These pillars serve as a solid foundation for building your own AI governance policies and rules.

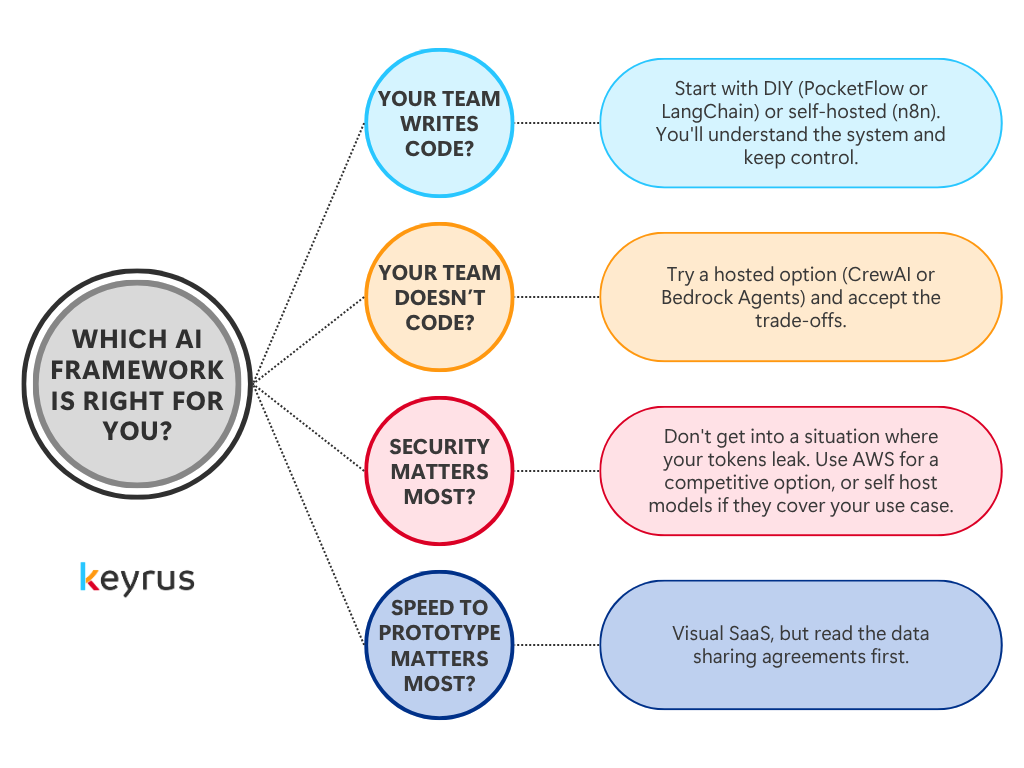

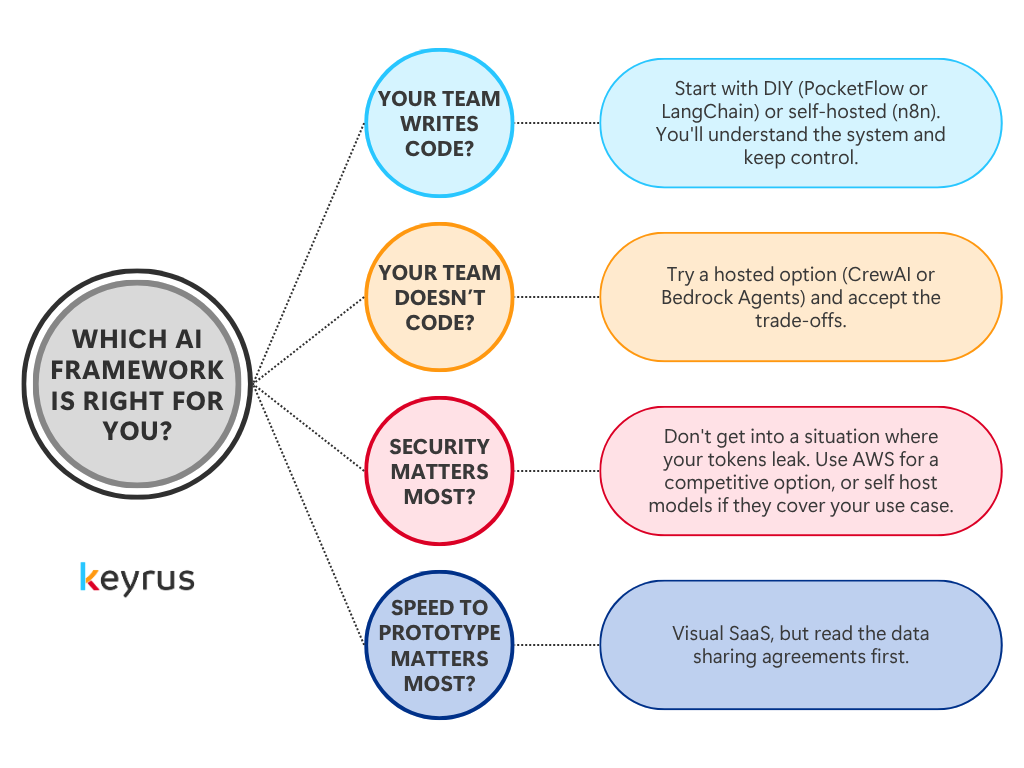

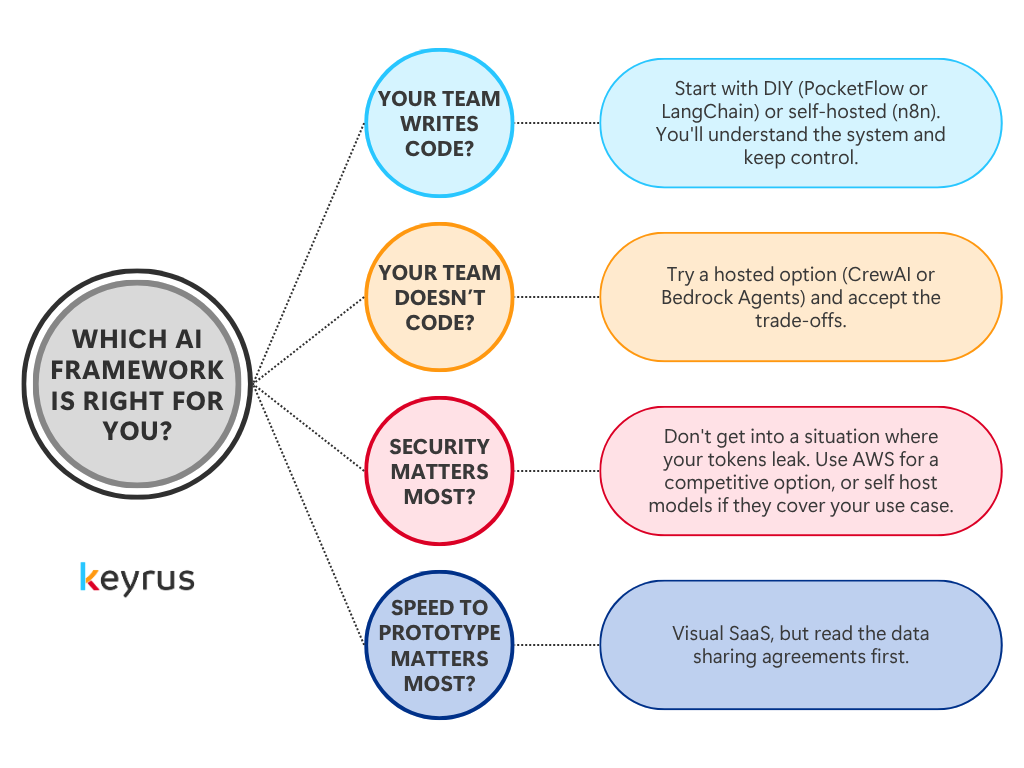

What should teams consider before choosing an AI framework?

Teams should evaluate four key factors before choosing an AI framework: whether it integrates with their internal data and tools, how it handles security and data access, whether the vendor trains on their data, and whether the team has the technical skills to build and maintain the solution.

What does AI Literacy look like in practice?

At Keyrus, our perspective is shaped by years of working at the intersection of data strategy and organizational change. We believe AI literacy initiatives are most effective when they:

1. Start with demystification, not technical depth. The goal is confidence and curiosity, not expertise.

2. Connect to real business context. Abstract concepts land when they're illustrated with examples relevant to participants' actual work.

3. Include hands-on engagement. Understanding how a machine learning model learns is more durable when people have trained one, even a simple one.

4. Address ethics and governance alongside capability. These aren't separate topics; they're part of the same conversation.

5. Are tailored by persona. Each level of your organization will have different needs than the others.

What is the Process pillar of AI Governance?

Establishing a governance committee is critical for defining accountability policies for GenAI applications. This committee needs to determine how your organization will manage AI. How does your organization manage an AI application and the processes around it? What will happen if someone is interacting with AI and it says something crazy? While the chances of a random hallucination or situation like that happening are low, they are possible. That’s why when you’re establishing AI processes, human-in-the-loop is a critical component.

What is the Technology pillar of AI Governance?

The third pillar of AI Governance is Technology. More and more technology companies are releasing out-of-the-box capabilities to manage AI hallucinations and alert/guard against misuse. There's even new tech that addresses common AI issues to prevent problems. When establishing your AI governance, it's important to take these technological aspects into consideration. Do you want to have security/preventative tech in addition to your AI?

What is the People pillar of AI Governance?

When you established your governance committee in the process pillar, you likely had conversations around the people and roles in the committee. Our people pillar somewhat overlaps with the process pillar, but there are distinctions. For people, you must determine and assign roles for your AI governance committee. This AI governance committee will be similar to your data governance committee if you have one. This committee will need to make decisions on who can see what data, who has access, how you define product and product hierarchy, who handles hallucinations, and who is setting security protocols. It’s also beneficial to think about what additional skill sets need to be added to your organization. You’ll want to go beyond technical skillsets (educational, ethical, etc.). Stewardship is key to this pillar being successful.

How does AI Learn?

At its core, a machine learning model is a mathematical structure that adjusts itself based on data. During training, it's exposed to enormous volumes of examples and iteratively refines its internal parameters to minimize errors. What emerges is not a set of hard-coded rules, but a statistical model of relationships: the AI has learned patterns, not memorized answers.
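The idea of iteratively refining parameters to minimize error can be seen in the smallest possible model: a single weight `w` fitted so that `w * x` approximates `y`. This is a teaching sketch with made-up data, using plain gradient descent on mean squared error; real models apply the same loop to millions or billions of parameters.

```python
def train(xs, ys, learning_rate=0.01, epochs=500):
    """Fit y ~ w * x by repeatedly nudging w against the error gradient."""
    w = 0.0  # the model starts out knowing nothing
    for _ in range(epochs):
        # Gradient of mean squared error with respect to w.
        gradient = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= learning_rate * gradient  # step in the direction that reduces error
    return w


# Made-up examples that follow the pattern y = 2x.
learned_w = train([1, 2, 3, 4], [2, 4, 6, 8])
```

Nothing in the code states the rule "multiply by two"; the weight converges toward 2 because that value minimizes the error on the examples, which is what learning a pattern means here.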

Which AI agent framework is best for data privacy and security?

Amazon Bedrock and self-hosted options like n8n offer the strongest data privacy guarantees. Unlike most SaaS tools, Amazon Bedrock does not train on your data by default, and self-hosted frameworks keep your data entirely within your own infrastructure.

What is the best AI framework for non-technical teams?

Visual SaaS tools like CrewAI or Amazon Bedrock Agents are the best starting point for non-technical teams. They offer no-code or low-code interfaces and fast setup, though teams should review data sharing agreements before committing.

What are LLMs (Large Language Models)?

Large language models (LLMs) are AI models trained on vast quantities of text that can understand and generate human language. They are the technology behind tools like ChatGPT, Claude, and Microsoft Copilot.

What is ML (Machine Learning)?

Machine learning (ML) is a subset of AI where systems learn from data rather than being explicitly programmed. ML models improve with exposure; they find patterns and make predictions without being told the rules.

What does AI Literacy mean?

The term "AI literacy" gets used loosely, so it's worth being precise. AI literacy is the ability to understand, evaluate, and work with artificial intelligence in a way that is effective, purposeful, informed, and responsible. It has three interconnected dimensions:

1. Conceptual understanding: Knowing what AI is, how it works at a meaningful (if not technical) level, what distinguishes different types of AI systems, and how to select the right type of AI tools that would add value to the organization.

2. Applied capability: Being able to identify where AI can add value in your work, how to interact with AI tools effectively, how to utilize it in a way that brings measurable results, and how to evaluate the outputs you receive.

3. Ethical and governance awareness: Understanding the risks AI introduces, the biases it can carry, and the responsible practices that should govern its use.

What is AI (Artificial Intelligence)?

Artificial intelligence (AI) is the broad field of building machines that can perform tasks requiring human-like intelligence: reasoning, pattern recognition, decision-making, and language understanding.