In machine learning, data cleaning and preprocessing take up a large share of project time; a commonly quoted figure is up to 80% of a data scientist’s time. Encoding is one of the most important of these tasks. It transforms categorical data into a numerical format that algorithms can process. In this blog, we will explore several encoding techniques and the scenarios each one suits best.

One-Hot Encoding

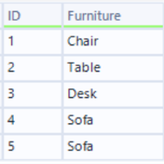

One-Hot Encoding takes a single categorical column and creates a separate binary column for each category it contains. This works best with nominal data, where the categories have no identifiable order. However, be careful with this technique when a column has a large number of unique categories: it produces a high-dimensional, sparse representation in which patterns are harder to find. This can lead to poor generalization and a risk of overfitting. The example below shows how the column ‘Furniture’ is split into binary columns for each individual item.

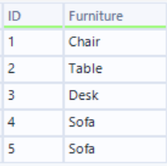

Input Data:

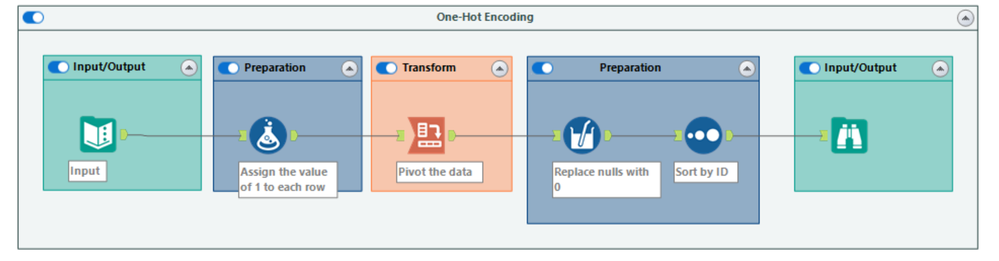

Workflow Overview:

First, we create a new value column and assign the numeric value of 1 to each row. Then, we can use the Cross Tab tool to pivot the Furniture column using the following configuration:

Furniture column for the column headers

The newly created value column from step 1 as the values for the new columns

Assuming we’re not also aggregating the data in this scenario, you’ll need to group by your unique identifier column

Select any method for aggregating the values, as we should only have one item per record when grouping by our unique identifier.

After this, all we need to do is replace any nulls with 0s and sort the rows back to their original order.
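For readers who want to see the same pivot outside the workflow tool, here is a minimal sketch in plain Python. The `ID` column name and the furniture values are made up for illustration, and `one_hot_encode` is a hypothetical helper, not part of any library.

```python
# Hypothetical input rows: a unique identifier plus the categorical column.
rows = [
    {"ID": 1, "Furniture": "Chair"},
    {"ID": 2, "Furniture": "Table"},
    {"ID": 3, "Furniture": "Sofa"},
    {"ID": 4, "Furniture": "Chair"},
]


def one_hot_encode(rows, column):
    """Return copies of the rows with one 0/1 column per category in `column`."""
    # The distinct categories become the new column headers (sorted for a stable order).
    categories = sorted({row[column] for row in rows})
    encoded = []
    for row in rows:
        # Keep every other field, drop the original categorical column.
        new_row = {key: value for key, value in row.items() if key != column}
        for category in categories:
            # 1 where the row holds this category, 0 everywhere else
            # (the equivalent of replacing nulls with 0 after the pivot).
            new_row[f"{column}_{category}"] = 1 if row[column] == category else 0
        encoded.append(new_row)
    return encoded


one_hot = one_hot_encode(rows, "Furniture")
for row in one_hot:
    print(row)
```

Because the rows are processed in their original order, no re-sorting step is needed in this version.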

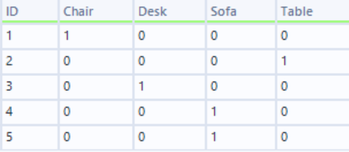

Final Outcome:

Label Encoding

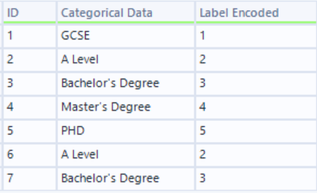

Label Encoding assigns a unique number to each category. This technique implies an ordinal relationship: the model will treat the numbers as a ranking of the categories. Education levels are a good use case because they follow a natural progression. However, our furniture example from One-Hot Encoding has no meaningful order, so label encoding it would likely lead the machine learning model to incorrect assumptions. The example below shows education levels where the encoded number increases with the level obtained.

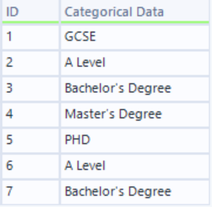

Input Data:

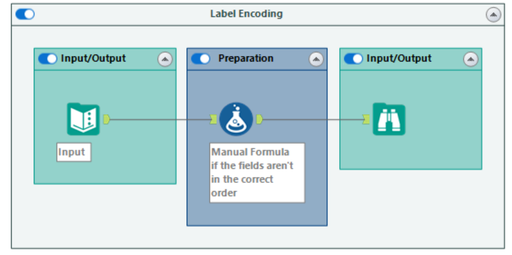

Workflow Overview:

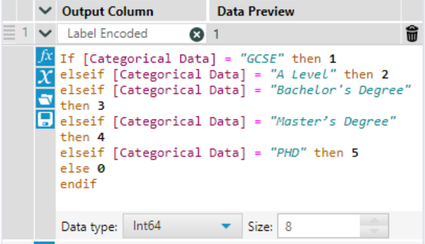

A simple Formula tool with a series of ‘IF Statements’ can be used to assign a new value to each categorical value. If dealing with a large number of categories, it would be a good idea to first use the Summarize tool and group by the categorical column to identify all possible values.

Formula Tool Overview:

An alternative solution could be to add a new input with your label-encoded value for each category and append it to your data input.
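The lookup-table idea can be sketched in plain Python as follows. The education levels and their order are assumptions chosen for illustration, and `label_encode` is a hypothetical helper; substitute the levels that actually appear in your data.

```python
# Assumed ordering of education levels, lowest to highest (illustrative only).
EDUCATION_ORDER = ["High School", "Bachelor's", "Master's", "PhD"]

# Lookup table: each level maps to its rank, starting at 1.
label_map = {level: rank for rank, level in enumerate(EDUCATION_ORDER, start=1)}

# Hypothetical column of values to encode.
education = ["Bachelor's", "PhD", "High School", "Master's", "Bachelor's"]


def label_encode(values, mapping):
    """Replace each value with its label, failing loudly on unmapped categories."""
    unknown = sorted(set(values) - set(mapping))
    if unknown:
        raise ValueError(f"No label defined for: {unknown}")
    return [mapping[value] for value in values]


encoded_education = label_encode(education, label_map)
print(encoded_education)  # [2, 4, 1, 3, 2]
```

Raising an error on an unmapped value plays the same role as checking the list of distinct categories first: a category missing from the mapping is caught rather than silently left unencoded.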

Final Outcome:

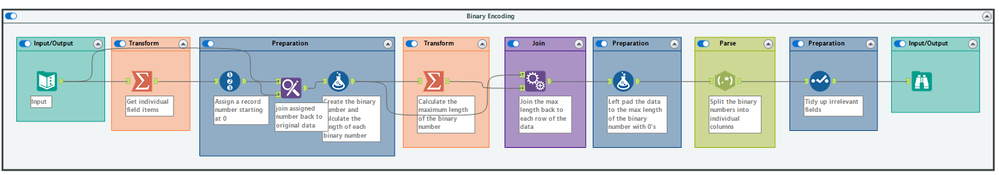

Binary Encoding

Binary Encoding assigns a binary number to each category. This is similar to One-Hot Encoding but can be more efficient by generating fewer features. As with One-Hot Encoding, this works best for nominal data. For the below example, we’ll look at our furniture column from One-Hot Encoding again.

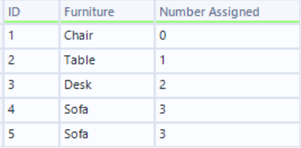

Input Data:

Workflow Overview:

We’ll start with getting a single list of the unique items using the Summarize tool. Then, assign a number to each of the categories using the Record ID tool before using the Find Replace tool to join the Record ID (Number Assigned) back to the original data:

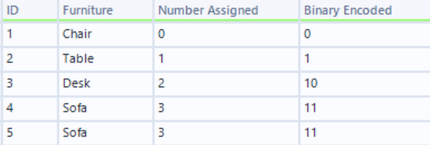

Next, we’ll use a Formula tool to assign the binary value to them, which in this case can be represented by 2 digits:

After this, we’ll need to calculate the maximum length of the binary column created and left-pad any shorter values with 0s so that every value has the same number of digits.

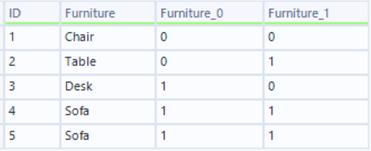

Next, we can drop the Number Assigned column and then split the Binary Number by digit using the RegEx tool to create the binary furniture columns for our final output:

Now, instead of the four separate columns that we’d get in One-Hot Encoding, we only have two. This can dramatically reduce the dimensionality, which can help avoid overfitting.
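The steps above can be sketched in plain Python as below. The furniture values and the `binary_encode` helper are hypothetical. One assumption matters for the column count: the sketch numbers the categories from 0, so four categories fit in two digits (00 to 11). Numbering from 1 would assign 4 (binary 100) to the fourth category and require a third digit.

```python
# Hypothetical column with four distinct furniture items.
furniture = ["Chair", "Table", "Sofa", "Bed", "Chair"]


def binary_encode(values, start=0):
    """Return one list of 0/1 digits per input value."""
    # Step 1: list the unique categories and assign each a number.
    categories = sorted(set(values))
    numbers = {category: n for n, category in enumerate(categories, start=start)}

    # Step 2: convert each assigned number to a binary string.
    bits = {category: format(n, "b") for category, n in numbers.items()}

    # Step 3: left-pad with zeros to the length of the longest binary string.
    width = max(len(b) for b in bits.values())
    bits = {category: b.zfill(width) for category, b in bits.items()}

    # Step 4: split each binary string into one column per digit.
    return [[int(digit) for digit in bits[value]] for value in values]


binary_columns = binary_encode(furniture)
for item, columns in zip(furniture, binary_columns):
    print(item, columns)
```

With this data the sorted categories are Bed, Chair, Sofa, Table, so Chair becomes `[0, 1]` and Table becomes `[1, 1]`. Calling `binary_encode(furniture, start=1)` shows the three-digit case.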

Target Encoding

Target Encoding or Mean Encoding calculates the mean value of your target variable for each category. This can be particularly useful when working with classification models. Let’s look at an example of customers on different subscriptions and whether they have used an offer.

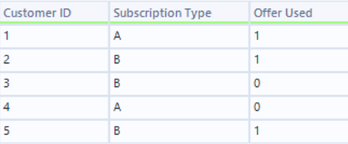

Input Data:

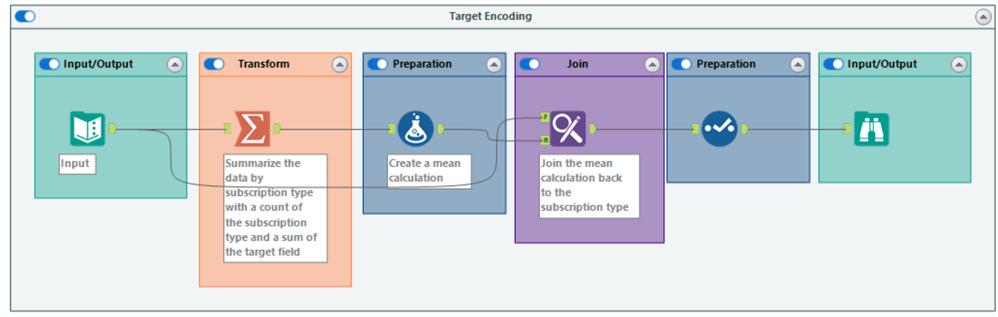

Workflow Overview:

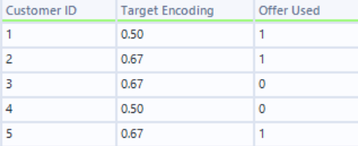

First, we’ll need to group by the Subscription Type, create a Count of each Subscription Type, and a Sum of our target variable, the ‘Offer Used’ column. Next, we calculate the mean of the target variable, in this case, ‘Offer Used’ for each subscription type:

Mean for a Subscription Type = (Sum of ‘Offer Used’) / (Count of Subscription Type)

A = (1 + 0) / 2 = 0.5

B = (1 + 0 + 1) / 3 ≈ 0.67

Now, all we have to do is replace the subscription type with the mean:
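As a rough sketch, the same calculation in plain Python looks like this. The per-category values match the worked example (A has 1 and 0; B has 1, 0 and 1), but the row order is an assumption and `target_encode` is a hypothetical helper.

```python
from collections import defaultdict

# (Subscription Type, Offer Used) pairs; row order is assumed.
records = [("A", 1), ("B", 1), ("A", 0), ("B", 0), ("B", 1)]


def target_encode(records):
    """Return the encoded column and the per-category means."""
    sums = defaultdict(int)    # Sum of the target per category
    counts = defaultdict(int)  # Count of rows per category
    for category, target in records:
        sums[category] += target
        counts[category] += 1

    # Mean of the target for each category.
    means = {category: sums[category] / counts[category] for category in counts}

    # Replace each category with its mean.
    encoded = [means[category] for category, _ in records]
    return encoded, means


encoded_subscription, subscription_means = target_encode(records)
print(subscription_means)
print(encoded_subscription)
```

Here `subscription_means["A"]` is 0.5 and `subscription_means["B"]` is 2/3, which rounds to the 0.67 shown above.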

Frequency Encoding

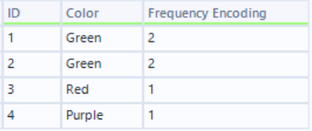

Frequency Encoding assigns a value based on a count of a category’s occurrence in the data set. This technique should be used with caution, especially when the frequency of the categories is imbalanced. However, this can be resolved by normalizing the values after encoding. The below example shows the encoded frequency based on a color column.

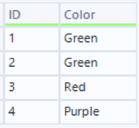

Input Data:

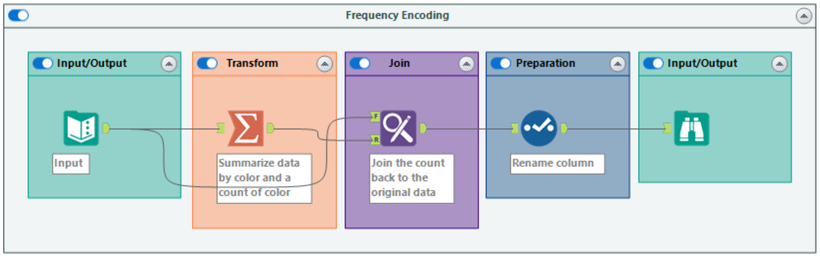

Workflow Overview:

First, we’ll use the Summarize tool to group by color and create a Count of the Color field.

All we need to do now is append the field back to our incoming data and rename it to your desired frequency encoding field name.
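A minimal plain-Python sketch of the same idea follows. The color values and the `frequency_encode` helper are hypothetical, and the `normalize` option shows just one possible normalization (dividing each count by the total number of rows); other scaling choices are equally valid.

```python
from collections import Counter

# Hypothetical color column.
colors = ["Red", "Blue", "Red", "Green", "Red", "Blue"]


def frequency_encode(values, normalize=False):
    """Replace each value with how often its category occurs."""
    counts = Counter(values)  # group by category and count
    if normalize:
        total = len(values)
        # Relative frequency: the share of rows belonging to each category.
        return [counts[value] / total for value in values]
    return [counts[value] for value in values]


color_frequency = frequency_encode(colors)
color_share = frequency_encode(colors, normalize=True)
print(color_frequency)  # [3, 2, 3, 1, 3, 2]
print(color_share)
```

Note that two categories with the same count receive the same encoded value, so the encoding cannot tell them apart.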

Final Output:

Things to Consider

As previously mentioned, the choice of encoding method depends on whether your data is nominal or ordinal. For data that has a natural order or ranking, Label Encoding is the preferred method. If your data lacks a natural order, One-Hot Encoding or Binary Encoding is more suitable.

Exercise caution when using One-Hot Encoding, especially if your field contains a large number of unique categories. This can significantly increase dimensionality, leading to inefficient models and a risk of overfitting. In these cases, Binary Encoding or Target Encoding offers a better alternative.

Finally, if how often a category occurs is strongly related to your target variable, Frequency Encoding may be a suitable option. Review the overall category counts first, as outliers can affect the accuracy of the model.

Conclusion

Encoding is a key step when preparing your data for machine learning models. While certain algorithms can process categorical data directly, encoding remains useful for feature engineering and can improve model accuracy. Though trial and error will still play a part, the choice of encoding method ultimately hinges on the specific data and the requirements of the machine learning model. This blog aims to serve as a foundational guide, offering a starting point for understanding these essential techniques.