Using Alteryx to Perform Data Dictionary Checks By Joseph Serpis, Keyrus Senior Consultant & Alteryx ACE Alteryx can help in this scenario by making the checks easier and faster using the data dictionary, which the client typically provides or approves with the format of the data they expect to receive.

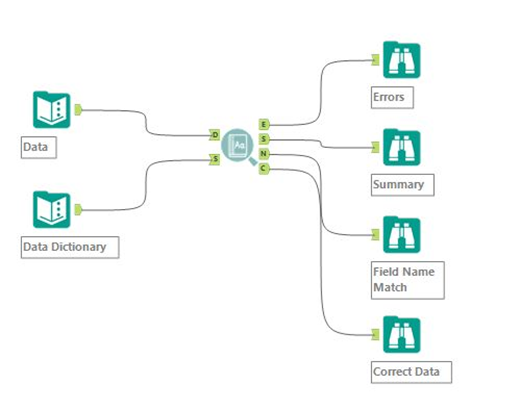

An Alteryx data dictionary macro can tackle this and will require two inputs the first being the data that is being tested, the second the Data Dictionary with the standards it will need check the data against.

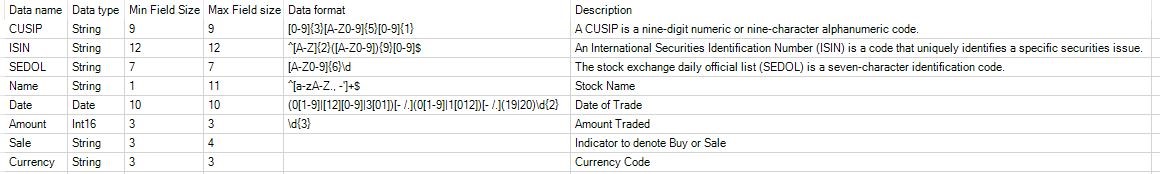

The Data Dictionary can be comprised of different fields to test the data. However in this scenario is comprised of 6 columns. What is particularly important is if the data needs to match a certain format to be valid, then Regex can greatly help. The Data format column has Regex to be used in the checking process (e.g. CUSIP where the nine characters must be either numeric or alphanumeric depending on the position). Using Regex in this way can enhance the data quality checking beyond checking the length of a field or the data type.

Closer look at the Macro The Macro can perform a number of checks to the validity of your data. This macro provides a detailed list of all the anomalies. It also provides a summary report of the total errors, performs a check on the column names, and also generates a list of all the data that correctly meets the standards defined in the Data Dictionary.

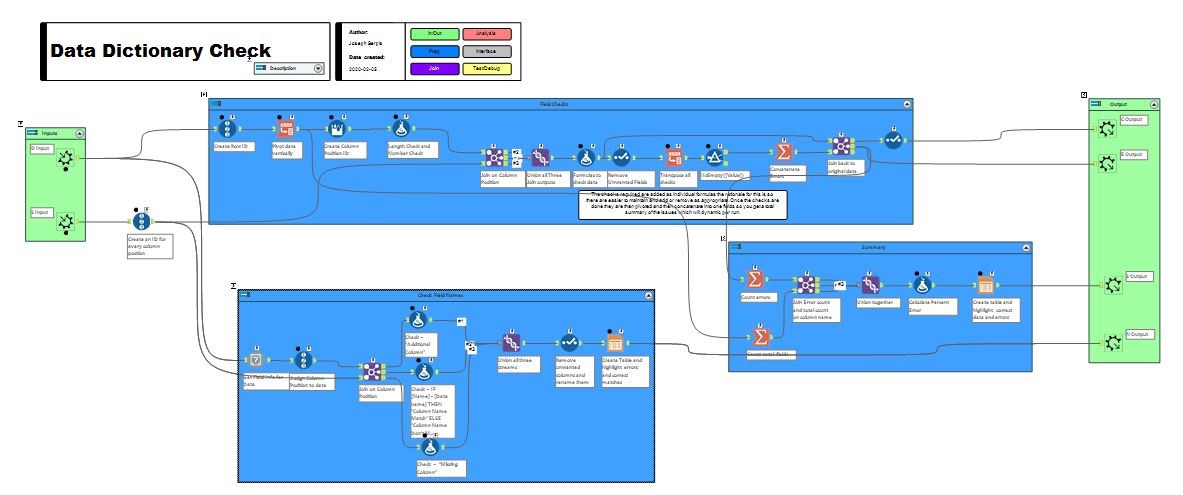

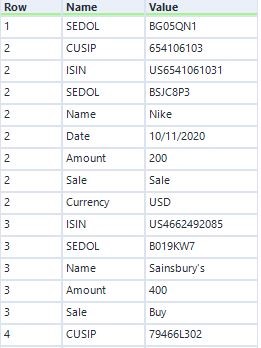

The section that checks the data quality works by pivoting the data vertically and joining with the Data Dictionary. Once this done the checks can be performed.

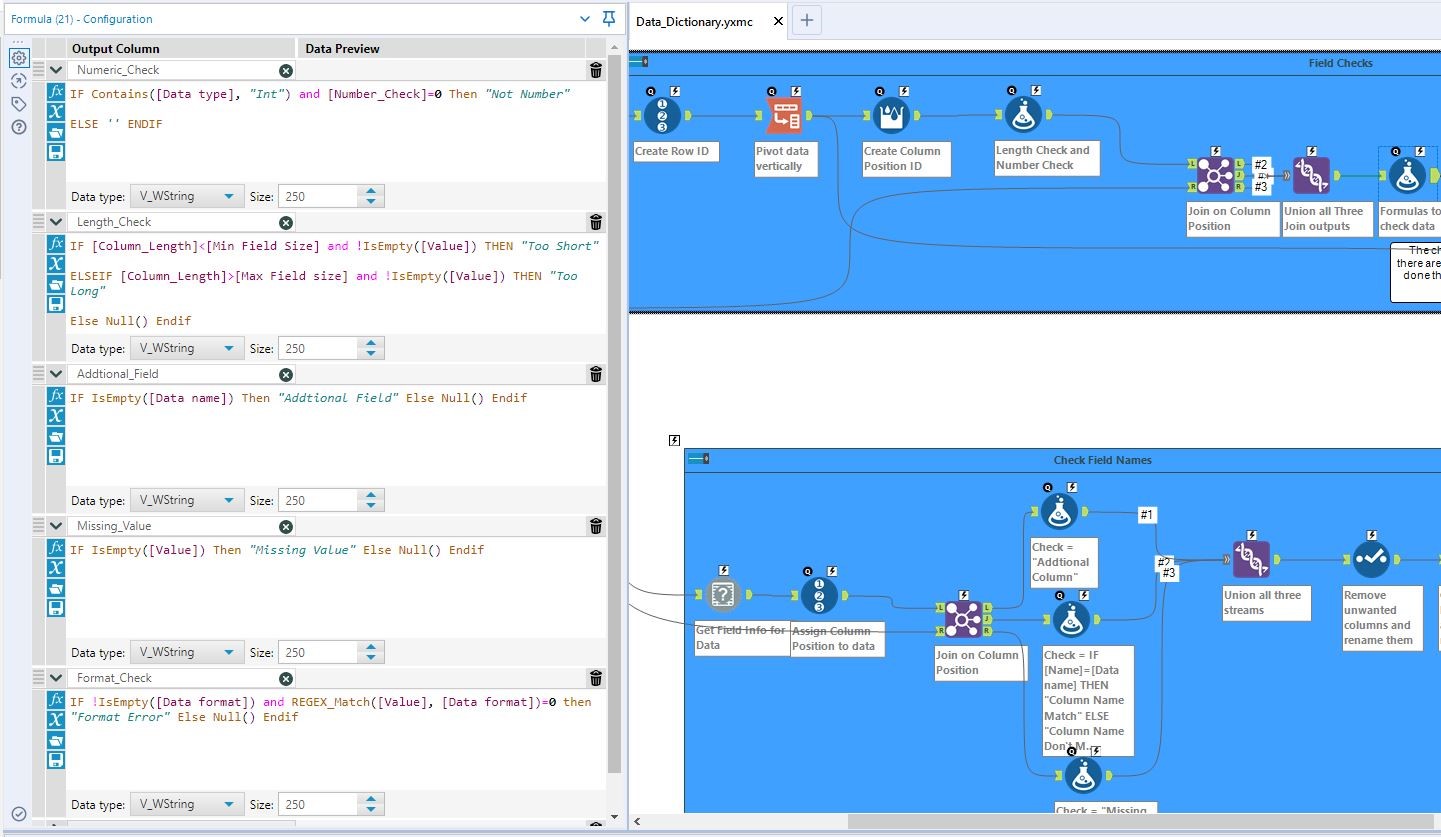

For ease of understanding and updating, the checks have been made into individual formulas, so they can easily be adjusted or added to. The regex defined in the Data Dictionary is used in the Format check and uses the function Regex match, to test the contents of the Value field matches the defined regex.

The remainder of this sections then pivots the checks vertically, so they can be concatenated into one field for each anomaly. The rationale for this is that the output will be dynamic and have listed all the issues the checks have found and not generate duplicates of the data.

The output from this section provides a detailed list of the anomalies identified and provides the column, row and value identified that needs to check in the original data. This can be sent to the producer of the data and should help them easily find the issues that have been identified.

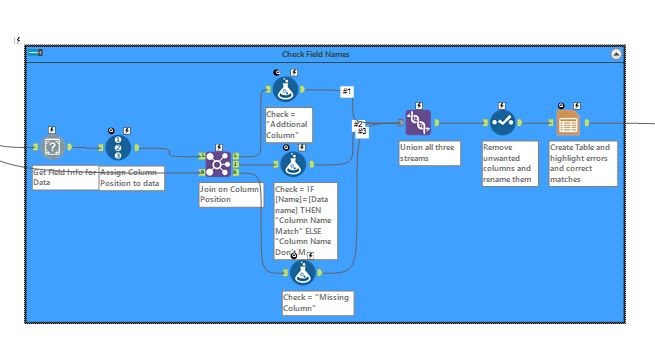

The check Field Names section checks if the Header names in the data match those from the Data Dictionary and also if they are in the correct position.

The output clearly highlights the field names that match the data dictionary and those that don’t match it.

The Summary sections counts all the anomalies and totals all the fields in the data, in order to calculate the percentage of errors across all of the data.

The summary report highlights the total percent errors across the entire dataset. This can be helpful to identify if a few records are causing the anomalies, or if a more significant problem is occurring across the data.

The fourth output of the macro produces a list of all the correct data, which matches the Data Dictionary. Depending on your requirements you could choose to process this data while you waited for the anomalies to be checked and reviewed.

Conclusion The benefits of creating a Data Dictionary macro in Alteryx is that it can easily identify any anomalies and ensure you remain compliant. The macro is repeatable and can be used to test on multiple versions of the same file and will consistently perform the same checks on the data being generated. Another benefit of creating a checking process like this in Alteryx is any changes that are requested can easily be modified and would not require changes in code, like if these checks were done outside of Alteryx using scripting tools.